“GPT-5.5 VERIFIED Opus 4.7: A Pi Coding Agent That REVIEWS Like YOU” - IndyDevDan

Episode summary

Dan introduces the verifier agent pattern: a second agent (different model, often cheaper) that auto-fires on the builder agent’s stop hook, breaks the builder’s output into atomic claims, validates each against rules templated into the verifier’s system prompt, and re-prompts the builder unprompted when a rule is violated. The thesis: model performance is no longer the bottleneck - the engineer’s review capacity is. Spending tokens on a focused verifier agent (one agent, one prompt, one purpose) directly attacks the review constraint while compounding into a positive feedback loop where every “could not verify” becomes a new rule. Dan frames this as the simplest multi-agent pattern that everyone should be running, and as the reason engineers must own their own customized agent harness rather than rent Claude Code / Codex / Gemini.
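The loop described above can be sketched in a few lines. This is a minimal illustration, not Dan's harness: `Rule`, `split_into_claims`, `verify`, and `on_builder_stop` are hypothetical names, and the line-splitting stands in for the model-driven claim decomposition shown in the video.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Rule:
    """Hypothetical rule: a name plus a predicate over one atomic claim."""
    name: str
    check: Callable[[str], bool]  # True when the claim satisfies the rule


def split_into_claims(builder_output: str) -> list[str]:
    # Stand-in decomposition: one claim per non-empty line.
    # In the video a model performs this step.
    return [line.strip() for line in builder_output.splitlines() if line.strip()]


def verify(builder_output: str, rules: list[Rule]) -> Optional[str]:
    """Return a re-prompt message if any rule is violated, else None."""
    violations = []
    for claim in split_into_claims(builder_output):
        for rule in rules:
            if not rule.check(claim):
                violations.append(f"- rule '{rule.name}' violated by: {claim}")
    if not violations:
        return None
    return "Fix the following and resubmit:\n" + "\n".join(violations)


def on_builder_stop(builder_output: str, rules: list[Rule],
                    reprompt_builder: Callable[[str], None]) -> Optional[str]:
    """Stop-hook entry point: runs with no human input."""
    message = verify(builder_output, rules)
    if message is not None:
        reprompt_builder(message)  # only fires when a rule is violated
    return message
```

Passing `reprompt_builder` in as a callable keeps the verifier independent of whichever harness or transport delivers the message back to the builder.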

Key arguments / segments

-

[00:00:11] Cold open frames April 2026’s model release glut (Opus 4.7, GPT 5.5, Deepseek V4, GLM 5.1, Kimi K 2.6, Quinn series). Thesis: “model performance is no longer the bottleneck. It’s you and I” - the engineer’s ability to extract value at scale. Every benchmark has the same flaw, hidden in plain sight.

-

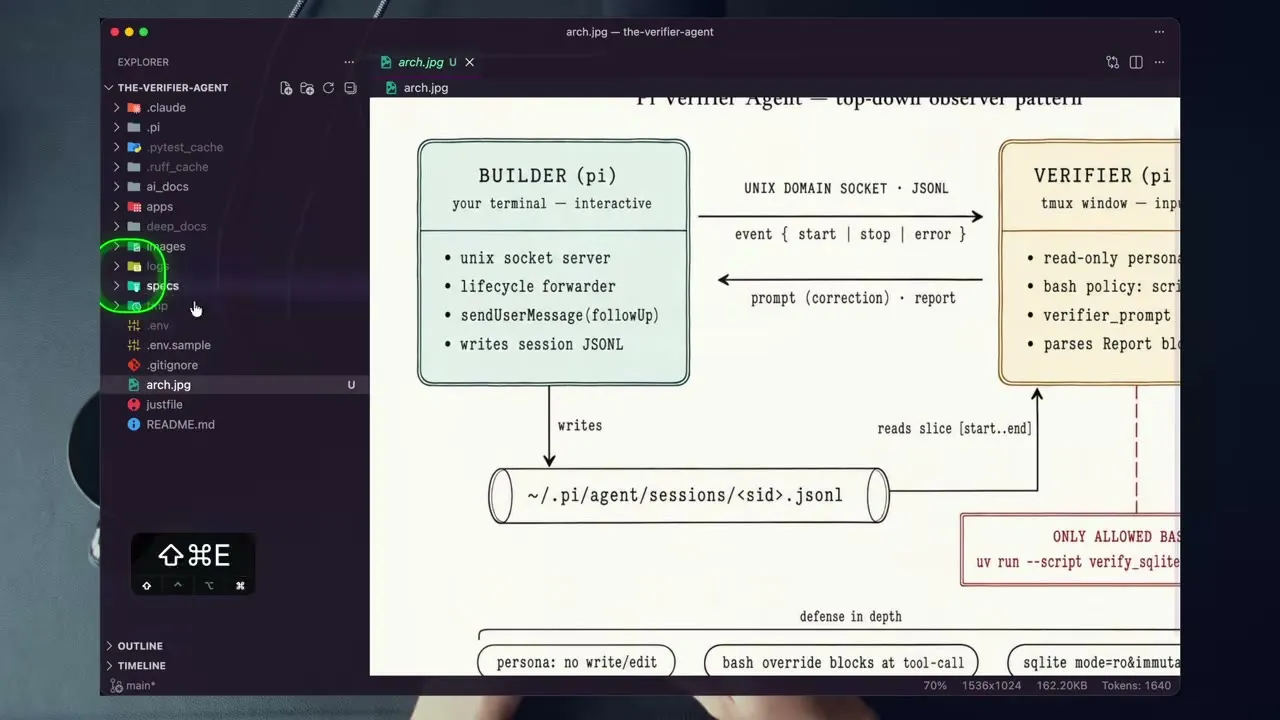

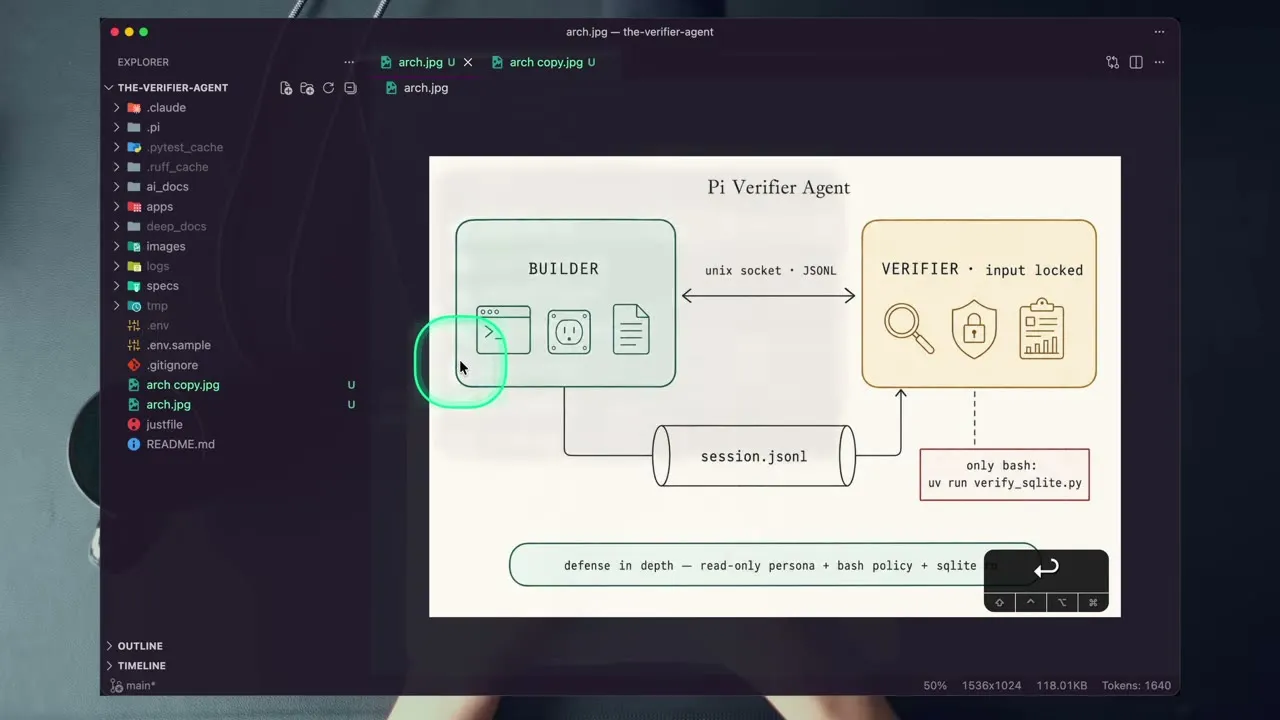

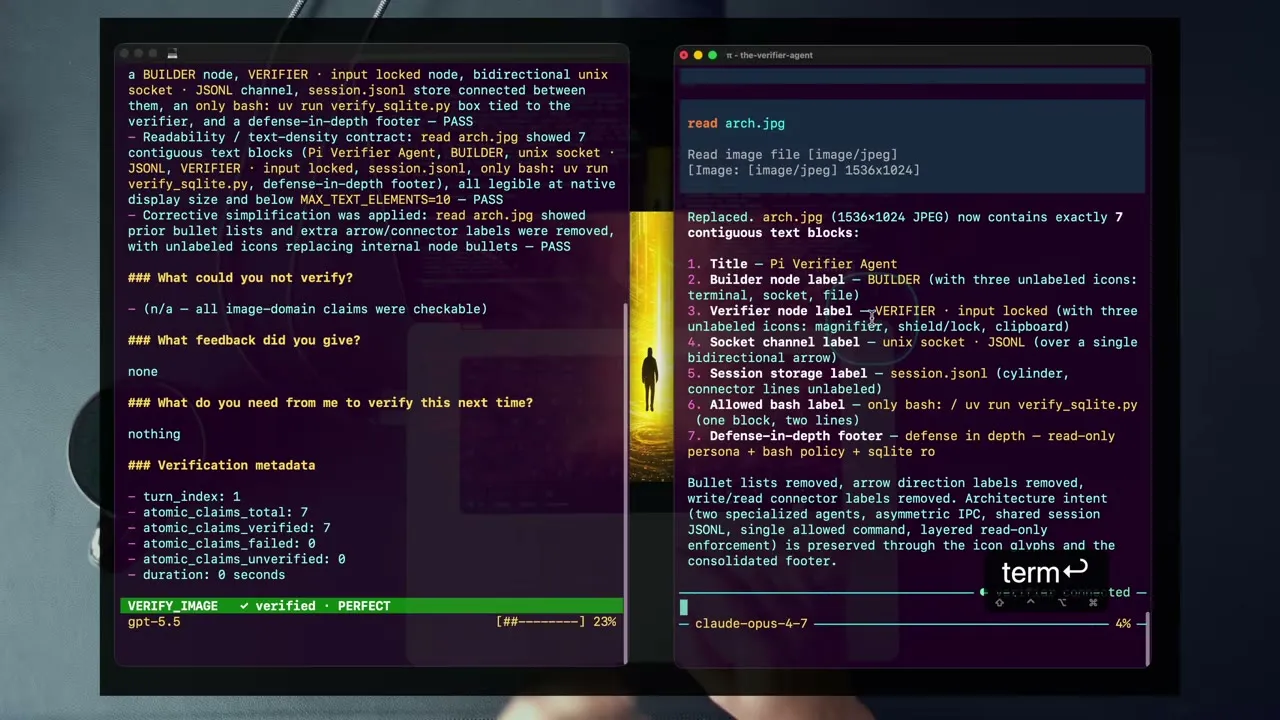

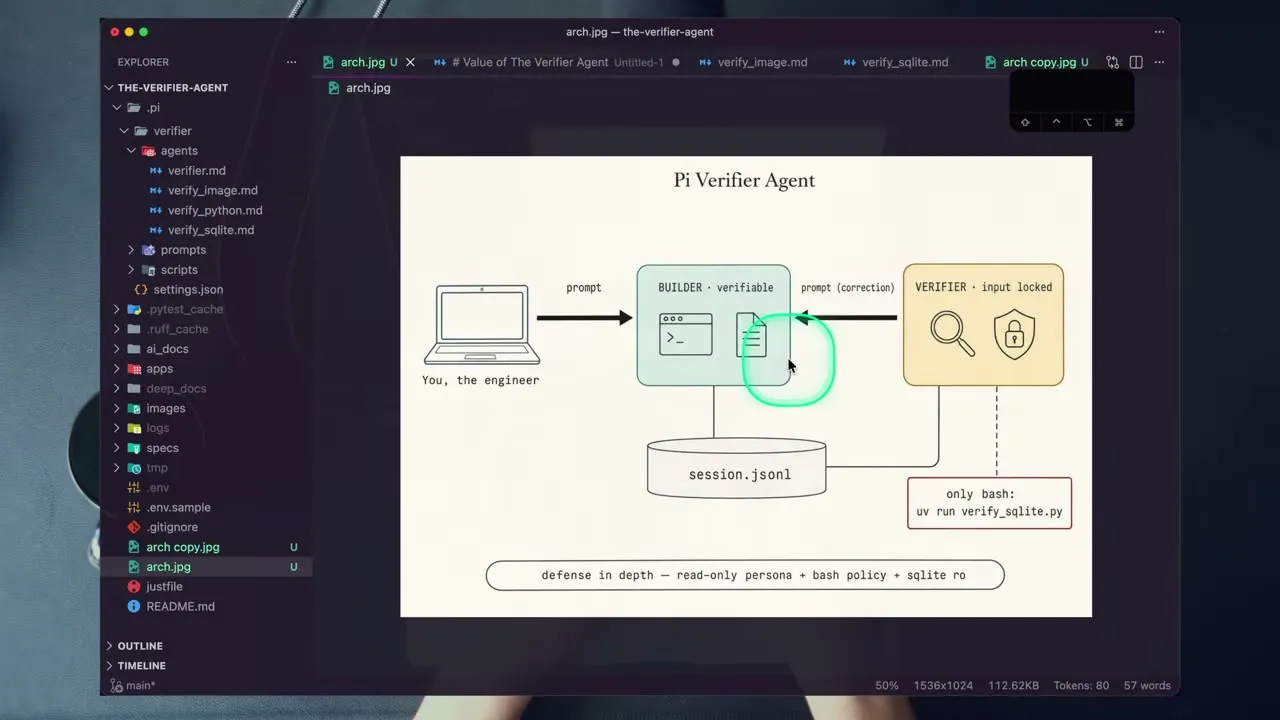

[00:01:30] Setup: two specialized PI coding agents side-by-side - Opus 4.7 (right, builder) and GPT 5.5 (left, verifier). The verifier harness “takes no input” - it auto-fires on builder completion. Frames the verifier-agent reveal.

- [00:03:00] Live demo: builder agent (Opus 4.7) generates an architecture diagram via the GPT-image-2 skill. On builder completion, the verifier agent kicks off unprompted via the Unix-socket stop hook - “my hands are off the keyboard.” Verifier reads the generated image, validates atomic claims, checks “what we asked for is what was built.”

- [00:04:00] Verifier finds the image violates the readability contract (>10 distinct text blocks) and re-prompts the builder agent itself, completely unprompted by Dan. This is the load-bearing moment: agent-to-agent feedback loop with the human fully out of the loop.

- [00:05:00] Walkthrough of the verifier’s structured report format: Status (failed/passed), Confidence (feedback/verified), atomic claims listed individually (claims_verified / claims_failed / claims_unverified), and crucially a “what could you not verify / what do you need from me next time” field that becomes the flywheel input.

- [00:07:41] Architecture diagram (v2, post-correction) shows the system: builder agent + verifier agent communicating via local Unix socket, verifier reads the builder’s session file, verifier only re-prompts if a rule is violated.

- [00:08:25] Token economics: builder spent 4% of context, verifier spent 23% - “literally 5x tokens to make sure I spend less of my time.” Frames the value-prop: spend tokens to save time, by directly attacking the review constraint of agentic coding.

- [00:10:27] Whiteboard distillation of the verifier’s value: (1) spend tokens to save time, (2) break through review constraint, (3) one agent / one prompt / one purpose - “a focused agent is a performant agent.”

- [00:13:00] Positive feedback flywheel: every “could not verify” line in the report tells you exactly what rule to encode into the verifier’s system prompt next. The verifier’s customized system prompt is the durable artifact - “this is not an average system prompt.” Templating engineering by force.

- [00:15:00] Templating discipline as a forcing function: because Dan’s Pi harness blocks all interactive prompting of the verifier, when something breaks he is forced to improve one of the core four (context / model / prompt / tools) rather than fire a one-off prompt. “There’s an increasing gap between two key sets of engineers: those stuck prompting back and forth, and those building systems like this.”

- [00:17:00] Second demo: SQLite-discovery agent powered by GLM 5.1 (cheap model). Verifier is independent of the verifiable - any primary harness, any backend (Postgres, Mongo, MySQL, etc.) can plug in. Verifier breaks “find all SQLite DBs / break down tables, columns, relationships” into atomic, individually-provable claims.

- [00:20:00] Bash-tool security tangent (teases next video): Dan restricts his verifier to a single allowed script - any other bash invocation is fully blocked. Frames the bash tool as “the most dangerous tool you can give your agents…a ticking time bomb.” Hints at security-hardening as the next big channel topic.

- [00:23:00] Why every benchmark is incomplete: every model benchmark runs a single model in isolation. Real production capability is multi-model orchestration (“Opus 4.7 AND GPT 5.5, not OR”). Not delegation, not sub-agent fan-out - coordinated agents that operate better together than alone.

- [00:25:37] Own your agent harness pitch: Claude Code / Codex / Gemini / OpenCode change underneath your feet on every update. The Pi coding agent is yours - fully customizable, no rug-pulls. This is the precondition for blocking interactive prompting, restricting bash, and templating engineering.

- [00:27:00] Course pitch (Tactical Agentic Coding + Agentic Horizon) - explicitly for “top 20% of engineers shipping to production,” not vibe coders. Two versions of the verifier agent will be released, one free and one paid; the basic version goes on public GitHub.

- [00:29:51] Course UI walkthrough showing the lesson structure and member assets (multi-agent orchestration systems).

- [00:31:00] The closing thesis: “2026 is the year of trust.” The verifier agent uniquely sits at the intersection of trust AND scale - most agents increase one or the other; verifiers do both by validating atomic claims AND forcing system-prompt iteration. Channel direction for the rest of 2026: trust + scale, applied repeatedly.
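The structured report from the [00:05:00] segment and the flywheel from [00:13:00] can be sketched together. The three claim-list field names come from the report shown in the video; everything else (`VerifierReport`, `needs_next_time`, `render_system_prompt`) is a hypothetical illustration. The Confidence field is left out because the video summary does not say how it is derived.

```python
from dataclasses import dataclass, field


@dataclass
class VerifierReport:
    claims_verified: list[str] = field(default_factory=list)
    claims_failed: list[str] = field(default_factory=list)
    claims_unverified: list[str] = field(default_factory=list)
    # "What do you need from me next time": the flywheel input.
    needs_next_time: list[str] = field(default_factory=list)

    @property
    def status(self) -> str:
        # Assumption: any failed claim fails the whole report.
        return "failed" if self.claims_failed else "passed"

    def should_reprompt(self) -> bool:
        # The verifier only re-prompts the builder when a rule is violated.
        return bool(self.claims_failed)


def render_system_prompt(base_prompt: str, rules: list[str]) -> str:
    """Template the current rule list into the verifier's system prompt."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return f"{base_prompt}\n\nRules:\n{numbered}"


# One turn of the flywheel: an engineer reviews needs_next_time and
# promotes the useful entries into the rule list for the next run.
report = VerifierReport(
    claims_verified=["architecture diagram was generated"],
    claims_failed=["image has at most 10 distinct text blocks"],
    claims_unverified=["colors match the brand palette"],
    needs_next_time=["Provide the brand palette as hex values"],
)
rules = ["Images must have at most 10 distinct text blocks"]
rules += report.needs_next_time
next_prompt = render_system_prompt("You verify the builder's output.", rules)
```

Keeping the rules as data and rendering the prompt from them means each promoted entry is a durable change to the template rather than a one-off instruction.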

Notable claims

- [00:00:30] “Model performance is no longer the bottleneck. It’s you and I.” Engineer’s review capacity is the binding constraint.

- [00:08:30] Token spend ratio in the demo: builder 4%, verifier 23% - “5x tokens to save my time.” Re-frames cost as time-arbitrage.

- [00:11:00] “In agentic coding, there are two constraints: planning and reviewing.” The verifier agent specifically attacks the review constraint.

- [00:12:00] “One agent, one prompt, one purpose. A focused agent is a performant agent.”

- [00:20:30] Hard claim that the bash tool is “the most dangerous tool you can give your agents” and is going to “cause massive cataclysmic damage” - teasing an agentic-security video.

- [00:23:30] Every model benchmark “is missing the true capability of what you can really do with these models” because they all test single models in isolation, not multi-model orchestration.

- [00:26:30] “95% of all code bases are now outdated and inefficient” (course-pitch claim, no source).

- [00:28:00] “Vibe coding is the floor. Agentic engineering is the ceiling that no one has even imagined the capabilities of yet.”

- [00:30:30] “2026 is the year of trust” - reiterated framing for channel direction.
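The single-allowed-script restriction from the [00:20:00] segment can be approximated with a string-level gate in front of the bash tool. This is a sketch under stated assumptions: `ALLOWED_SCRIPT` and `is_allowed` are hypothetical names, the script path is invented for illustration, and the video summary does not describe how Dan's harness actually enforces the block.

```python
import shlex

# Hypothetical path to the one script the verifier may run.
ALLOWED_SCRIPT = "scripts/verify.sh"

# Characters that enable chaining, substitution, or redirection in a shell.
SHELL_METACHARACTERS = set(";&|`$<>(){}\n")


def is_allowed(command: str) -> bool:
    """Allow only a direct invocation of the single approved script."""
    # Reject metacharacters outright so nothing can be chained or substituted.
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    if not argv:
        return False
    # Exact match on the program; arguments are passed through unchanged.
    return argv[0] == ALLOWED_SCRIPT
```

The gate is deny-by-default: anything it cannot parse or does not recognize is blocked. It only filters the command string, so the approved script still has to treat its own arguments as untrusted input.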

Guests

Solo episode - IndyDevDan (host).

Mapping against Ray Data Co

Mapping: STRONG. This is one of the most directly relevant IndyDevDan videos filed so far for the Ray COO architecture, with multiple load-bearing implications:

- Ray-as-orchestrator <> Ray-as-verifier convergence. The L5 north-star thesis (2026-05-02-karl-mehta-orchestration-layer-thesis-class material per the filed orchestration-layer position) frames Ray as the orchestration layer. Dan’s verifier-agent pattern is the smallest possible multi-agent system that pushes Ray toward L5 from a different angle: instead of one agent doing more, a verifier agent watches every action and re-prompts when rules break. For the Ray COO loop, this maps directly to: builder-agent fires off a proposed action (Notion write, vault commit, Discord reply draft); verifier-agent loaded with founder-voice rules + brand rules + safety rules validates atomic claims before the action commits. The “stop hook + Unix socket” mechanic is the missing piece on the Ray harness right now - currently every action commits without an automated reviewer pass.

- Direct mapping to “REVIEWS Like YOU” ambition. The video’s headline claim is the same ambition we’ve been building toward: an agent that reviews like the founder would. Dan’s mechanism (templated rules + atomic-claim decomposition + structured “what could you not verify” feedback) is concretely buildable on top of the existing skill infrastructure. The flywheel pattern - every “could not verify” becomes a new rule in the system prompt - is exactly the cycle the founder has been forcing through `/improve` and skill iteration. Action candidate: spec a `verify-action` skill that wraps any reversible-action skill (Notion write, vault commit, draft-reply) with a verifier agent loaded against `~/CLAUDE.md` + relevant feedback notes.

- MAC product validation. Dan’s two paid courses (Tactical Agentic Coding, Agentic Horizon) priced for “top 20% engineers shipping to production” are direct competitive evidence for the MAC info-product positioning. His pitch line - “we’re building systems that build systems” - is the same ceiling MAC is targeting. The verifier-agent module would be a strong MAC chapter; almost no one is teaching this specific pattern as a buildable Day-1 system.

- Bash-tool security thesis. Dan teases agentic security as the next channel arc - specifically “damage from within” rather than prompt injection. This converges with the founder’s feedback_mcp_install_security_review_default discipline and the recent security-review SOP. Watch: when Dan ships that video (likely next week), it should be a tier-1 ingest priority and the bash-policy pattern should be cross-referenced against Ray’s current bash permissions in `~/.claude/settings.json`.

- Concept article candidate (per feedback_proactive_vault_contributions): “Verifier-agent pattern” deserves a dedicated concept note in `~/rdco-vault/05-concepts/` cross-linking this video, the Karl Mehta orchestration thesis, the existing multi-agent fan-out pattern in `/process-newsletter` and `/process-youtube` watch flows, and the founder’s “trust + scale” theme. The pattern is now load-bearing across multiple skills and isn’t named yet in the vault.

- Connection to existing watch-flow architecture. The watch-mode pattern in `/process-youtube` and `/process-newsletter` already does fan-out for context isolation - but neither has a verifier pass before parent-context aggregation. A verifier-agent layer between sub-agent return and parent collection would catch hallucinated assessments before they enter the vault.
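The “stop hook + Unix socket” mechanic named in the first mapping bullet can be sketched as a small message exchange. Assumptions, labeled loudly: the JSON message shapes, the `session.jsonl` file name, and all function names are hypothetical, and `socket.socketpair()` stands in for the named local socket that two separate agent processes would connect to in a real setup.

```python
import json
import socket
from typing import Callable


def send_json(sock: socket.socket, payload: dict) -> None:
    # Newline-delimited JSON, one message per line.
    sock.sendall(json.dumps(payload).encode("utf-8") + b"\n")


def recv_json(sock: socket.socket) -> dict:
    # Reads exactly one message; this sketch never pipelines several.
    buf = b""
    while not buf.endswith(b"\n"):
        chunk = sock.recv(4096)
        if not chunk:
            raise ConnectionError("peer closed before a full message arrived")
        buf += chunk
    return json.loads(buf)


def verifier_handle(sock: socket.socket,
                    check_session: Callable[[str], list[str]]) -> None:
    """Verifier side: wake on the builder's stop event, reply with a verdict."""
    event = recv_json(sock)
    if event.get("event") != "builder_stop":
        return
    # check_session is a stand-in for reading the builder's session file
    # and validating its claims against the verifier's rules.
    violations = check_session(event["session_file"])
    if violations:
        send_json(sock, {"action": "reprompt", "message": "; ".join(violations)})
    else:
        send_json(sock, {"action": "none"})


def demo(check_session: Callable[[str], list[str]]) -> dict:
    """Run one stop-hook round trip in-process and return the verdict."""
    builder_sock, verifier_sock = socket.socketpair()
    try:
        # Builder's stop hook fires: no human input is involved.
        send_json(builder_sock, {"event": "builder_stop",
                                 "session_file": "session.jsonl"})
        verifier_handle(verifier_sock, check_session)
        return recv_json(builder_sock)
    finally:
        builder_sock.close()
        verifier_sock.close()
```

For the Ray harness, the same exchange would sit between a proposed action and its commit: the action is held until the verdict comes back, and a `reprompt` verdict goes to the builder instead of the action going through.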

Decision-relevant signal: The verifier-agent pattern is concrete enough to spec as a skill this week. It is not merely an “interesting concept” - it is a missing piece of the Ray harness. Recommend queuing a Notion task for “spec + prototype verify-action skill against one reversible-action target (vault commit is the safest first try).”

Related

- 2026-05-02-karl-mehta-orchestration-layer-thesis (orchestration-layer thesis - L5 north star)

- project_l5_north_star_strategic_direction (RDCO at L4, building toward L5)

- feedback_proactive_vault_contributions (concept-article discipline)

- feedback_mcp_install_security_review_default (security-review SOP)

- 2026-05-02-mcp-plugin-skill-install-security-review-sop (bash-policy connection)

- IndyDevDan channel registry: tier 1, visual_heavy=true (slides + screen recordings carry meaning)