“Google Invests $40B Into Anthropic, GPT 5.5 Drops, and Google Cloud Dominates” — Moonshots EP #252

Episode summary

Weekly Moonshots panel cataloging an unusually dense 8-week stretch of frontier-model releases: 15 major model drops, including Kimi K2.6 (open-weight, ~$4.6M training cost), GPT-5.5 (Codex-focused, 60% hallucination drop, terminal-bench gains), and DeepSeek V4. The bigger structural story is the capital-for-compute carousel: Google committed $40B to Anthropic ($10B cash now at a $350B mark, 5 GW of TPU compute over 5 years); Amazon committed $33B in cash for $100B+ of AWS spend over a decade, plus 5 GW. Wissner-Gross frames every Anthropic move (Project Deal marketplace, Claude Code, Skills) as one consistent strategy: maximize economic value per token, with codegen as the dominant per-token earner. The episode also covers the Joby air-taxi NYC flight, the Tesla Cybercab production start, World ID/Orb verification being integrated into Zoom (deepfake losses now $1B annually, projected $40B by 2027), OpenAI Chronicle screen-monitoring agents, and a UAE government-wide agentic-AI mandate (50% of govt services on agentic AI within 2 years).

Key arguments / segments

- [00:00:00] Cold-open headline reel: Google’s $40B Anthropic investment, TSMC as the actual bottleneck (only Elon talks about it), Google Cloud “dominating” with 8th-gen TPUs (TPU 8T training / 8i inference), GPT-5.5 framed as a Codex-strengthening release, “math is cooked” (frontier-math benchmarks creeping up ~1%/month).

- [00:03:30] “15 major model releases in 8 weeks”: a pace of roughly two majors per week. Wissner-Gross: now down to a three-way Western frontier race (OpenAI, Anthropic, Google). Cites Noam Brown wondering aloud whether weights matter as much as they used to, given that compute-driven inference-time reasoning is becoming the dominant axis. The open-source Chinese frontier (Kimi, DeepSeek) trails the closed-source US frontier by ~3-6 months.

- [00:14:00] Kimi K2.6 (Moonshot AI): trillion-parameter open-weight MoE, 32B active params, 300 parallel agents, native text/image/video, 30x cheaper than top closed models, trained for ~$4.6M. Benchmarks beat Opus 4.6. Dave: 1/8 the cost of Claude API at Fireworks, ~1/30 self-hosted. Caveat: code-injection trust risk vs Anthropic/OpenAI guardrails. Wissner-Gross: helpful for self-hosting and fine-tuning, but not at the frontier; the US closed-weight / China open-weight disparity is structural for now.

- [00:24:50] GPT-5.5 (released 7 weeks after 5.4): native omnimodal text+audio+video+images in a single end-to-end architecture; 37-point gain on long-context reasoning at 1M tokens; 40% fewer tokens for same latency; hallucination down 60% over 5.4. Wissner-Gross: biggest leap is on terminal-bench 2.0 (the agentic-CLI benchmark for Codex/Claude-Code-class environments): “very much feels like a release intended to strengthen OpenAI’s Codex,” i.e. a direct shot at Claude Code’s market. Frontier-Math Tier 4 up ~2% in 2 months → projecting all professional-grade research math solved in 4-5 years. API pricing is 2x that of 5.4 ($5/$30 per M in/out).

- [00:31:10] Google ecosystem: Sundar at Google Cloud Next 2026 announces TPU 8T (training) and TPU 8i (inference), 3x faster training, 80% better perf/$, designed for “millions of agents in real time.” Sundar: 16B tokens/min processed, 75% of Google’s code now AI-written. Wissner-Gross: Epoch reports Google is now ~1/4 of all AI compute on the planet; TPUs being designed by TPUs (recursive self-improvement down to silicon). Plus 960k Nvidia Vera Rubin GPUs for A5X, which is 2x the size of Colossus 2 and 2.4x that of Stargate Abilene.

- [00:37:00] Capital-for-compute carousel. Google → Anthropic: $40B total ($10B cash now at a $350B valuation; note Anthropic on secondaries is at $1T, so the strategics are buying at ~1/3 the going price), 5 GW TPU committed over 5 years, $30B more if perf targets are hit. Amazon → Anthropic: $33B total (+$25B on top of prior $8B), Anthropic commits $100B+ AWS spend over 10 years, runs Claude on Trainium, 5 GW capacity. Anthropic is privately telling investors to expect a $40-70B revenue trajectory by year-end (vs the $100B mentioned a few pods back). Compute is the only thing capping their growth.

- [00:39:30] Salim’s bottleneck argument: “It’s all bottlenecked at TSMC… only Elon will talk about it.” Jensen says he has no long-term agreement with TSMC; only Samsung/Intel/TSMC can fab any of this. Wissner-Gross adds that powered land plus on-site energy permitting is the more immediate (next 12 months) bottleneck; TSMC dominates beyond that, especially under any geopolitical stress. Investment logic: anyone with chip access + power supply (legacy aluminum/manufacturing electrical capacity converting to data centers); kernel-level software companies that improve perf on AMD/legacy GPUs or squeeze more out of Nvidia.

- [00:46:20] “What did Claude just kill?”: Project Deal marketplace. Anthropic ran a Claude-operated marketplace internally (Claude buying/selling/negotiating on behalf of employees). eBay stock dipped briefly. Wissner-Gross’s load-bearing claim: every Anthropic strategic move (Project Deal, Claude Code, Skills, all of it) reduces to one principle: maximize economic value per token. Per-token, generating useful working code is more valuable than generating video or cat images, which is why everyone is converging on codegen and OpenAI dropped consumer-Sora-style chasing. (Quote as paraphrased; see transcript.)

- [00:54:40] Elon vs Sam OpenAI trial begins in Oakland Federal Court. Two-phase structure (liability then awards). Wissner-Gross flags reported political-influence concerns in jury selection and that the district judge will treat the jury verdict as advisory and decide the final award from the bench. Dave: “Elon doesn’t have to win the case; he just has to slow OpenAI down. In the middle of a singularity, losing 3 months means you lost.” Texts already in discovery.

- [00:59:30] OpenAI Chronicle: agentic background process taking periodic screenshots, extracting context via OCR + visual analysis, building structured memory files. Sam called it “telepathy.” Wissner-Gross: Microsoft’s “Recall” was killed for the same reason; this is an “architectural kludge” that wants to be built into the OS / secure enclave, not bolted on. Privacy panic is symptomatic of the bad architecture, not the underlying capability.

- [01:07:30] World ID + Orb integration into Zoom. Backstory: 2024 Arup deepfake video-call scam = $25M wire fraud (Hong Kong CFO impersonation). Deepfake fraud losses: $130M (2019-23) → $400M (2024) → $1B (2025) → projected $40B by 2027. Orb (iris scan) gets you a “verified human” badge on Zoom calls. Grok can now convincingly generate AI images of French women holding reflective IDs. Dave’s anecdote: his own controller wired $300K to China during a company meeting on a faked instruction from Dave; ~$75K unrecovered.

- [01:14:00] “Token-maxing” as a status/vanity metric (404 Media report): startup CEOs bragging about token spend exceeding human payroll. Dave defends it: get in the race now, optimize later. Salim reframes as “ratio of tokens to compressed iteration cycles” rather than raw spend. Wissner-Gross: at frontier labs already 1:2 humans:compute, trending toward 1:infinity. Capitalism will force this.

- [01:18:30] UAE: 50% of govt services on agentic AI within 2 years. Salim was the test case for a 5-hour golden-visa issuance (vs 5 days in Singapore). Sheikh Mohammed: “AI is no longer a tool. It analyzes, decides, executes and improves in real time.” Question raised: can Western democracies keep up?

- [01:23:30] OpenAI ChatGPT for Clinicians: free to all US clinicians; scores 59 vs 43.7 for human doctors on HealthBench. Wissner-Gross: “the professions are cooked.” Predicts similar releases for law, management consulting, and financial work: OpenAI mapped the knowledge-work verticals via GDPVal and is fine-tuning per-vertical from there. Strategic read: OpenAI uses these free vertical releases as distribution channels into biomed enterprise (where the real money is) plus a data-aggregation moat.

-

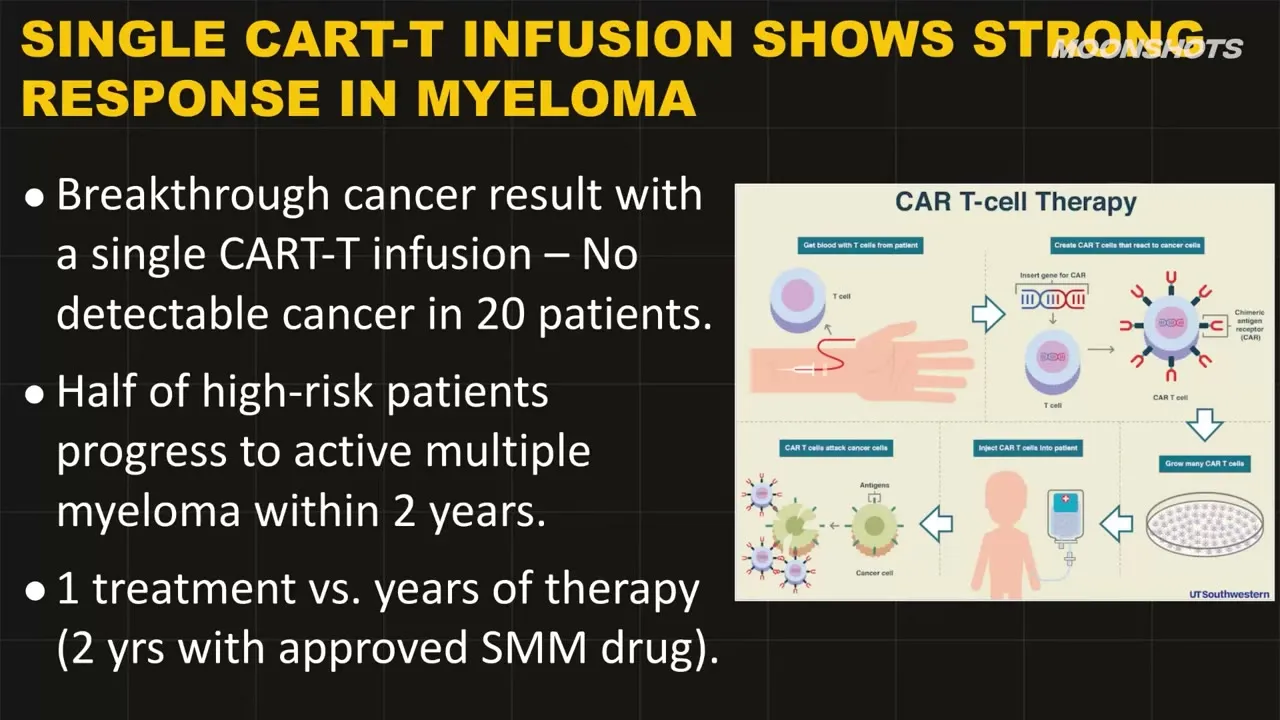

[01:32:00] Biomedical breakthroughs cluster: TopHeart (AI for donor-heart viability, ~500 add’l hearts/yr); pancreatic cancer mRNA vaccines (87.5% 6-yr survival in responders vs historical 13% for pancreatic); single-shot CAR-T showing 100% MRD-negative in melanoma; candesartan (BP drug) repurposed for MRSA via AI screening. David Fagenbaum’s Every Cure (using AI to map 4,000 FDA-approved drugs against 18,000 known diseases) flagged as the citizen-science model.

- [01:47:00] Robotics shipping cluster: ping-pong robot ACE wins 3/5 vs human champion (Wissner-Gross: “it’s astounding this took so long,” given only a handful of degrees of freedom); Tesla Cybercab production started April 24, $30K target, 17 moving parts in drivetrain (vs ~2,000 in ICE), 2M/yr production goal; Joby Aviation’s first NYC eVTOL air-taxi flight (JFK to W30th in 7 min vs >1hr drive), 100x quieter than a helicopter.

- [01:56:30] AMA segment. Standout answers: (1) consulting firm future = “intelligence platform + domain expertise + change management,” not a junior-pyramid; (2) creative-ideation problem solved by AI taste-curation funnels (Wissner-Gross plugs his portfolio co Henry / “meet Henry.ai”); (3) entry-level jobs vanishing is “fine: the apprenticeship model was a holdover anyway, build-in-public + GitHub-as-resume is the new path”; (4) p(doom): Musk/Hinton 10-20%, Amodei 25%, Altman ~10%; rationale for racing forward = “stopping won’t stop China.”
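
A rough sketch of what the [00:24:50] pricing note implies per request. Only the $5/$30 per million tokens for GPT-5.5 comes from the notes; the GPT-5.4 price is inferred from “2x of 5.4”, the request sizes are made up, and applying the 40% token reduction to output tokens only is an assumption. `PRICE_PER_M` and `request_cost` are hypothetical names, not any vendor API.

```python
# Illustrative arithmetic only; names and request sizes are hypothetical.
PRICE_PER_M = {
    # model: (input $ per 1M tokens, output $ per 1M tokens)
    "gpt-5.5": (5.00, 30.00),  # as quoted in the notes
    "gpt-5.4": (2.50, 15.00),  # ASSUMPTION: half of 5.5, inferred from "2x of 5.4"
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at the per-million-token prices above."""
    in_price, out_price = PRICE_PER_M[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Made-up agentic-coding request: large context in, modest output.
old_cost = request_cost("gpt-5.4", input_tokens=200_000, output_tokens=10_000)

# ASSUMPTION: the "40% fewer tokens" claim applies to output tokens only.
new_cost = request_cost("gpt-5.5", input_tokens=200_000, output_tokens=6_000)

cost_ratio = new_cost / old_cost
print(f"5.4: ${old_cost:.2f}  5.5: ${new_cost:.2f}  ratio: {cost_ratio:.2f}x")
```

Under these assumptions the per-request cost still rises despite the token savings, because input tokens dominate the bill and their price doubled.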

Notable claims

- [00:25:30] GPT-5.5: hallucination down 60% over 5.4; 40% fewer tokens at same latency; 1M-token context retains usable beginning-of-context recall.

- [00:26:30] GPT-5.5’s biggest jump is on terminal-bench 2.0 — Wissner-Gross’s read: a deliberate Codex/Claude-Code competitive shot.

- [00:27:30] Frontier-Math Tier 4 climbing ~1% per month → ~50% solved now → 100% in 4-5 years at current rate.

- [00:33:30] Epoch: Google now accounts for ~1/4 of all AI compute on the planet.

- [00:37:30] Google → Anthropic: $40B total commitment, $350B entry valuation vs $1T secondary market = strategics buying at ~1/3 going price.

- [00:38:30] Amazon → Anthropic: $33B cash for $100B+ committed AWS spend over a decade + 5 GW.

- [00:38:30] Anthropic privately projecting $40-70B revenue by end of 2026, capped only by compute availability.

- [00:53:30] Wissner-Gross’s unifying frame: every Anthropic move = “maximize economic value per token.” Codegen wins per-token economics → universal codegen convergence across labs.

- [01:08:30] Deepfake-fraud losses: $130M (2019-23) → $400M (2024) → $1B (2025) → $40B projected by 2027.

- [01:18:00] UAE: 50% of all government services on agentic AI within 2 years.

- [01:24:00] OpenAI ChatGPT for Clinicians: HealthBench 59 vs 43.7 humans, validated on 700K responses.

- [01:36:30] Pancreatic cancer mRNA vaccine: 87.5% of immune-responder patients (responders were 8 of the 16 treated) alive at 6-yr median follow-up vs 13% historical.
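
The projection-style claims above can be sanity-checked with simple arithmetic. A minimal sketch, assuming linear progress on Frontier-Math Tier 4 and a constant year-over-year multiple for deepfake losses (both are simplifications, not claims made in the episode); the function names are hypothetical.

```python
def months_to_target(current_pct: float, target_pct: float,
                     points_per_month: float) -> float:
    """Linear extrapolation: months until a benchmark score reaches target."""
    return (target_pct - current_pct) / points_per_month


def implied_annual_multiple(start_value: float, end_value: float,
                            years: float) -> float:
    """Constant yearly growth multiple that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years)


# [00:27:30] ~50% solved now, ~1 point per month, target 100%.
frontier_math_years = months_to_target(50, 100, 1) / 12

# [01:08:30] $1B (2025) -> $40B projected (2027), i.e. two years of growth.
deepfake_multiple = implied_annual_multiple(1e9, 40e9, 2)

# [00:37:30] $350B entry valuation vs $1T on secondaries.
entry_vs_secondary = 350e9 / 1e12

print(f"Frontier-Math Tier 4 to 100%: {frontier_math_years:.2f} years")
print(f"Implied deepfake-loss growth: {deepfake_multiple:.2f}x per year")
print(f"Entry price as share of secondary: {entry_vs_secondary:.2f}")
```

At ~1 point per month, the remaining 50 points take about 50 months, which matches the 4-5 year figure; going from $1B to $40B in two years requires losses to multiply roughly 6.3x each year, and $350B against $1T is 0.35, i.e. the “~1/3” in the notes.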

Guests

- Peter H. Diamandis — host, founder XPRIZE / Singularity University / A360 / Fountain Life

- Salim Ismail — founder OpenExO, ex-Singularity exec director (joined from Guadalajara airport)

- Dave Blundin — founder & GP Link Ventures, MIT

- Alexander Wissner-Gross — computer scientist, founder Reified, writer of “Innermost Loop” Substack (alexw.org)

Mapping against Ray Data Co

Three load-bearing connections to active RDCO positioning:

- GPT-5.5 confirms the agent-deployer-thesis evidence cluster. This release is exactly the watch-list signal: terminal-bench 2.0 is the explicit benchmark for Codex/Claude-Code-class agentic CLI work, and that’s where 5.5 makes its biggest single jump. Wissner-Gross’s read (“this is OpenAI strengthening Codex, deliberately”) confirms the agent-deployer arena is now the explicit two-frontier-lab battleground (it was already the Anthropic thesis; OpenAI is now publicly contesting it, not chasing consumer plays). Combined with the ChatGPT-for-Clinicians release (vertical knowledge-work agent rollouts mapped via GDPVal), this is OpenAI executing the same play 2026-04-14-levie-agent-deployer-role-jd foresaw, but now on the operator side, not the deployer side. The substrate-threat read does not change: both labs converging on agent-deployer as their main commercial vector strengthens (not weakens) the thesis that the human role is “agent-deployer” in the next 18-36 months.

- Wissner-Gross’s “maximize economic value per token” frame is the cleanest explanation yet for why every frontier lab is converging on codegen. It also explains the SaaS-kill cycle from the labs’ perspective: it’s not malicious, it’s per-token economics. Worth a Sanity Check angle on “the per-token economic gradient” as the actual force shaping which categories AI eats first. Cross-link to 2026-04-01-every-saas-dead-linear and 2026-04-01-stratechery-axios-attack-claude-code-leaked-security.

- Compute-as-strategic-currency. Google buying Anthropic equity at 1/3 the secondary market price in exchange for TPU commits, Amazon doing the same with Trainium: this is the structure that will determine who can build agent-deployer infra at scale. RDCO does not need to pick a winner here, but the fact that Anthropic is locked into both AWS and GCP simultaneously is meaningful for any Anthropic-dependent product (Squarely’s Claude usage, the COO agent’s own Claude budget). Single-vendor-Anthropic risk just dropped materially: Anthropic literally cannot be cut off from compute by either hyperscaler without the other backing them.

Secondary connections (worth noting, not load-bearing):

- UAE 50%-agentic-government goal is a real-world existence proof for the agent-deployer playbook at govt scale — useful evidence point if the founder ever pitches deployer tooling to public-sector buyers.

- World ID / Orb on Zoom validates the deepfake-defense layer becoming a real budget line. Adjacent to but not direct to current RDCO bets.

- Token-maxing as vanity metric — useful Sanity Check material on how the discourse is misframing “AI productivity” with raw spend, which Salim correctly reframes as compressed iteration cycles.

Related

- 2026-04-14-levie-agent-deployer-role-jd — Levie’s agent-deployer JD framework; this episode confirms OpenAI is now publicly playing in that arena

- 2026-04-01-every-saas-dead-linear — Linear / SaaS-death thesis; per-token economics frame from this ep is the cleanest causal explanation

- 2026-04-01-stratechery-axios-attack-claude-code-leaked-security — Claude Code as the per-token monetization vehicle

- 2026-04-01-karpathy-llm-knowledge-bases — knowledge-base layer that codegen agents consume

- 2026-04-02-moonshots-ep-uber-robotaxi-dara-khosrowshahi — prior Moonshots, robotaxi positioning continuity for Cybercab segment

- 2026-01-29-moonshots-ep226-cathie-wood-ai-bitcoin — prior Moonshots compute-thesis episode

- 2025-11-17-moonshots-ep208-ai-costs-plummeting — token-economics longitudinal